I thought OpenAI’s GPT-4o, its leading model at the time, would be perfectly suited to help. I asked it to create a short wedding-themed poem, with the constraint that each letter could only appear a certain number of times so we could make sure teams would be able to reproduce it with the provided set of tiles. GPT-4o failed miserably. The model repeatedly insisted that its poem worked within the constraints, even though it didn’t. It would correctly count the letters only after the fact, while continuing to deliver poems that didn’t fit the prompt. Without the time to meticulously craft the verses by hand, we ditched the poem idea and instead challenged guests to memorize a series of shapes made from colored tiles. (That ended up being a total hit with our friends and family, who also competed in dodgeball, egg tosses, and capture the flag.)

However, last week OpenAI released a new model called o1 (previously referred to under the code name “Strawberry” and, before that, Q*) that blows GPT-4o out of the water for this type of purpose.

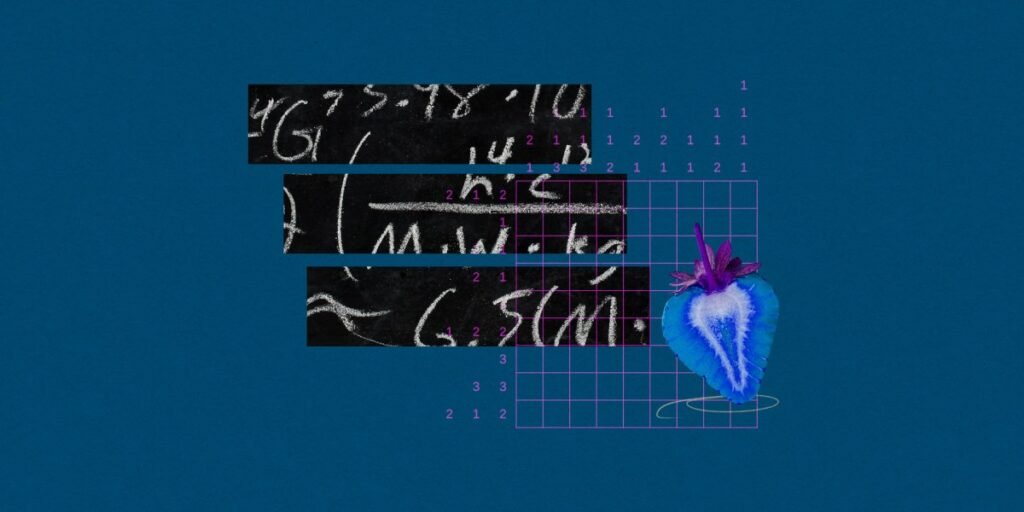

Unlike previous models that are well suited for language tasks like writing and editing, OpenAI o1 is focused on multistep “reasoning,” the type of process required for advanced mathematics, coding, or other STEM-based questions. It uses a “chain of thought” technique, according to OpenAI. “It learns to recognize and correct its mistakes. It learns to break down tricky steps into simpler ones. It learns to try a different approach when the current one isn’t working,” the company wrote in a blog post on its website.

OpenAI’s tests point to resounding success. The model ranks in the 89th percentile on questions from the competitive coding organization Codeforces and would be among the top 500 high school students in the USA Math Olympiad, which covers geometry, number theory, and other math topics. The model is also trained to answer PhD-level questions in subjects ranging from astrophysics to organic chemistry.

In math olympiad questions, the new model is 83.3% accurate, versus 13.4% for GPT-4o. In the PhD-level questions, it averaged 78% accuracy, compared with 69.7% from human experts and 56.1% from GPT-4o. (In light of these accomplishments, it’s unsurprising the new model was pretty good at writing a poem for our nuptial games, though still not perfect; it used more Ts and Ss than instructed to.)

So why does this matter? The bulk of LLM progress until now has been language-driven, resulting in chatbots or voice assistants that can interpret, analyze, and generate words. But in addition to getting lots of facts wrong, such LLMs have failed to demonstrate the types of skills required to solve important problems in fields like drug discovery, materials science, coding, or physics. OpenAI’s o1 is one of the first signs that LLMs might soon become truly helpful companions to human researchers in these fields.

It’s a big deal because it brings “chain-of-thought” reasoning in an AI model to a mass audience, says Matt Welsh, an AI researcher and founder of the LLM startup Fixie.

“The reasoning abilities are directly in the model, rather than one having to use separate tools to achieve similar results. My expectation is that it will raise the bar for what people expect AI models to be able to do,” Welsh says.