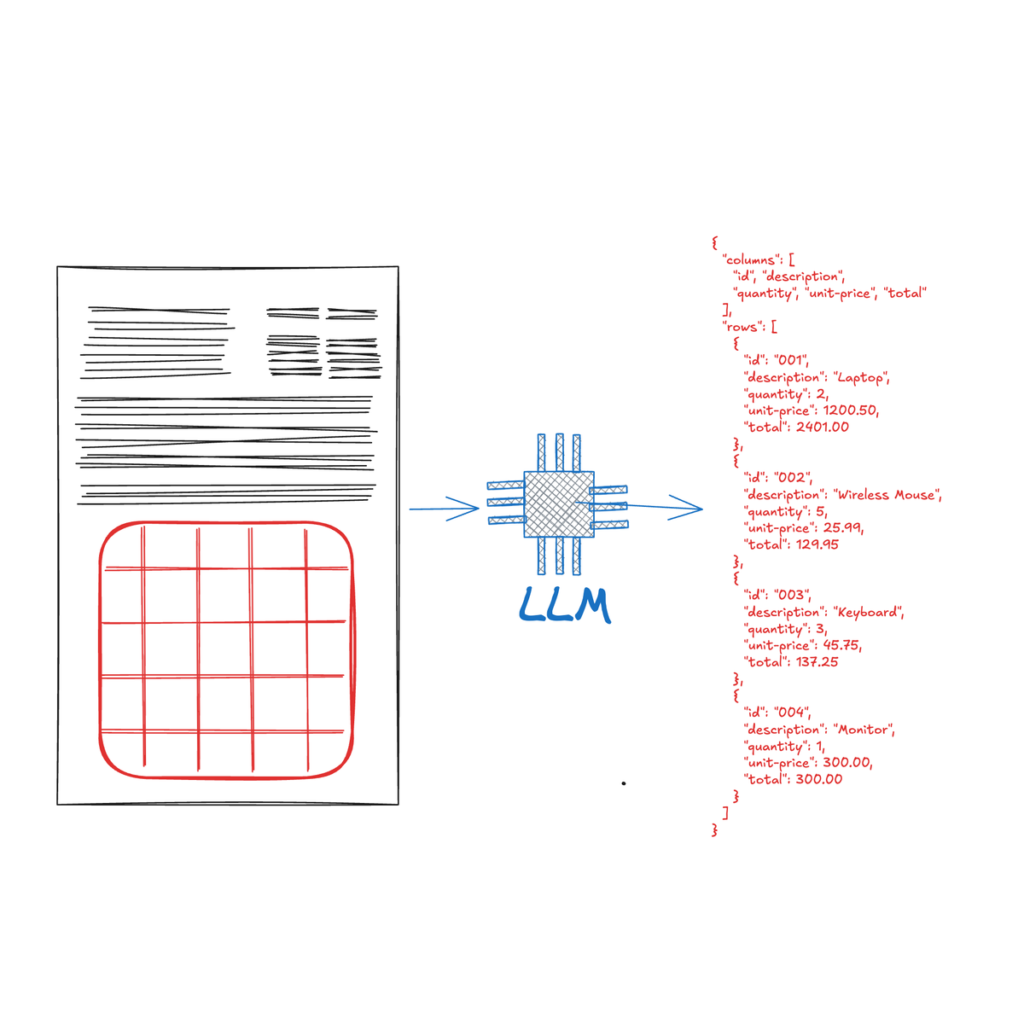

Picture this: you’re drowning in a sea of PDFs, spreadsheets, and scanned documents, trying to find that one piece of data trapped somewhere in a complex table. From financial reports and research papers to resumes and invoices, these documents can contain complex tables with a wealth of structured data that needs to be quickly and accurately extracted. Traditionally, extracting this structured information has been a complex task in data processing. However, with the rise of the Large Language Model (LLM), we now have another tool with the potential to unlock intricate tabular data.

Tables are ubiquitous, holding a large volume of information packed in a dense format. An accurate table parser can pave the way to automating many business workflows.

This comprehensive guide will take you through the evolution of table extraction techniques, from traditional methods to the cutting-edge use of LLMs. Here’s what you’ll learn:

- An overview of table extraction and its innate challenges

- Traditional table extraction methods and their limitations

- How LLMs are being applied to improve table extraction accuracy

- Practical insights into implementing LLM-based table extraction, including code examples

- A deep dive into Nanonets’ approach to table extraction using LLMs

- The pros and cons of using LLMs for table extraction

- Future trends and potential developments in this rapidly evolving field

Table extraction refers to the process of identifying and extracting structured data from tables embedded within documents. The primary goal of table extraction is to convert the data within embedded tables into a structured format (e.g., CSV, Excel, Markdown, JSON) that accurately reflects the table’s rows, columns, and cell contents. This structured data can then be easily analyzed, manipulated, and integrated into various data processing workflows.
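As a small illustration of what a "structured format" means here, the sketch below serializes one tiny table into CSV, JSON, and Markdown using only the Python standard library. The sample rows and the helper names `to_csv`, `to_json`, and `to_markdown` are made up for this example and are not part of any extraction library.

```python
import csv
import io
import json

# Hypothetical sample table: a header row followed by data rows.
header = ["Item", "Quantity", "Unit Price"]
rows = [["Widget", "2", "9.50"], ["Gadget", "1", "24.00"]]


def to_csv(header, rows):
    """Serialize the table as CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(header, rows):
    """Serialize the table as a JSON list with one object per row."""
    return json.dumps([dict(zip(header, row)) for row in rows], indent=2)


def to_markdown(header, rows):
    """Serialize the table as a Markdown pipe table."""
    lines = [
        "| " + " | ".join(header) + " |",
        "| " + " | ".join("---" for _ in header) + " |",
    ]
    lines += ["| " + " | ".join(row) + " |" for row in rows]
    return "\n".join(lines)


print(to_csv(header, rows))
print(to_json(header, rows))
print(to_markdown(header, rows))
```

All three outputs carry the same rows and columns; which one you pick mostly depends on what the downstream workflow consumes.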

Table extraction has wide-ranging applications across various industries. Here are a few examples of use cases where converting unstructured tabular data into actionable insights is crucial:

- Financial Analysis: Table extraction is used to process financial reports, balance sheets, and income statements. This enables quick compilation of financial metrics for analysis, forecasting, and regulatory reporting.

- Scientific Research: Researchers use table extraction to collate experimental results from multiple published papers.

- Business Intelligence: Companies extract tabular data from sales reports, market research, and competitor analysis documents. This allows for trend analysis, performance tracking, and informed decision-making.

- Healthcare: Table extraction helps in processing patient data, lab results, and clinical trial outcomes from medical documents.

- Legal Document Processing: Law firms and legal departments use table extraction to analyze contract terms, patent claims, and case law statistics.

- Government and Public Policy: Table extraction is applied to census data, budget reports, and election results. This supports demographic analysis, policy planning, and public administration.

Tables are very versatile and are usable in many domains. This flexibility also brings its own set of challenges, which are discussed below.

- Diverse Formats: Tables come in various formats, from simple grids to complex nested structures.

- Context Dependency: Understanding a table often requires comprehending the surrounding text and document structure.

- Data Quality: Dealing with imperfect inputs, such as low-resolution scans, poorly formatted documents, or non-textual elements.

- Varied Formats: Your extraction pipeline should be able to handle multiple input file formats.

- Multiple Tables per Document/Image: Some documents contain multiple tables or page images, each of which has to be extracted individually.

- Inconsistent Layouts: Tables in real-world documents rarely adhere to a standard format, making rule-based extraction challenging:

  - Complex Cell Structures: Cells often span multiple rows or columns, creating irregular grids.

  - Varied Content: Cells may contain diverse elements, from simple text to nested tables, paragraphs, or lists.

  - Hierarchical Information: Multi-level headers and subheaders create complex data relationships.

  - Context-Dependent Interpretation: Cell meanings may depend on surrounding cells or external references.

  - Inconsistent Formatting: Varying fonts, colors, and border styles convey additional meaning.

  - Mixed Data Types: Tables can combine text, numbers, and graphics within a single structure.

These factors create unique layouts that resist standardized parsing, necessitating more flexible, context-aware extraction methods.

Traditional methods, including rule-based systems and machine learning approaches, have made strides in addressing these challenges. However, they can fall short when faced with the sheer variety and complexity of real-world tables.

Large Language Models (LLMs) represent a significant advancement in artificial intelligence, particularly in natural language processing. These transformer-based deep neural networks, trained on vast amounts of data, can perform a wide range of natural language processing (NLP) tasks, such as translation, summarization, and sentiment analysis. Recent developments have expanded LLMs beyond text, enabling them to process diverse data types including images, audio, and video, thus achieving multimodal capabilities that mimic human-like perception.

In table extraction, LLMs are being leveraged to process complex tabular data. Unlike traditional methods that often struggle with varied table formats in unstructured and semi-structured documents like PDFs, LLMs leverage their innate contextual understanding and pattern recognition abilities to navigate intricate table structures more effectively. Their multimodal capabilities allow for comprehensive interpretation of both textual and visual elements within documents, enabling them to more accurately extract and organize information. The question is, are LLMs actually a reliable method for consistently and accurately extracting tables from documents? Before we answer this question, let’s understand how table information was extracted using older methods.

Traditionally, table extraction relied primarily on three main approaches:

- rule-based systems,

- traditional machine learning methods, and

- computer vision methods

Each of these approaches has its own strengths and limitations, which have shaped the evolution of table extraction techniques.

Rule-based Approaches:

Rule-based approaches were among the earliest methods used for table detection and extraction. These systems first extract text with OCR, along with a bounding box for each word, and then apply a predefined set of rules and heuristics to identify and extract tabular data from documents.

How Rule-based Systems Work

- Layout Analysis: These systems typically start by analyzing the document layout, looking for visual cues that indicate the presence of a table, such as grid lines or aligned text.

- Pattern Recognition: They use predefined patterns to identify table structures, such as regular spacing between columns or consistent data formats within cells.

- Cell Extraction: Once a table is identified, rule-based systems determine the boundaries of each cell based on the detected layout, such as grid lines or consistent spacing, and then capture the data within those boundaries.

This approach can work well for documents with highly consistent and predictable formats, but it begins to struggle with more complex or irregular tables.
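To make the three steps above concrete, here is a toy sketch of a rule-based extractor that works on OCR words with bounding-box coordinates. It is only an illustration under simplifying assumptions: the `Word` class, the pixel tolerances, and the sample coordinates are hypothetical, and real systems use many more rules than these three.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    x: float  # left edge of the word's bounding box
    y: float  # top edge of the word's bounding box


def group_rows(words, y_tolerance=5.0):
    """Rule 1: words whose top edges are within y_tolerance share a row."""
    rows = []
    for word in sorted(words, key=lambda w: (w.y, w.x)):
        if rows and abs(rows[-1][0].y - word.y) <= y_tolerance:
            rows[-1].append(word)
        else:
            rows.append([word])
    return rows


def column_starts(words, x_tolerance=10.0):
    """Rule 2: left edges that nearly coincide define one column."""
    starts = []
    for x in sorted(w.x for w in words):
        if not starts or x - starts[-1] > x_tolerance:
            starts.append(x)
    return starts


def extract_grid(words, y_tolerance=5.0, x_tolerance=10.0):
    """Rule 3: place each word in the cell of its row and nearest column."""
    starts = column_starts(words, x_tolerance)
    grid = []
    for row in group_rows(words, y_tolerance):
        cells = [""] * len(starts)
        for word in sorted(row, key=lambda w: w.x):
            col = min(range(len(starts)), key=lambda i: abs(starts[i] - word.x))
            cells[col] = (cells[col] + " " + word.text).strip()
        grid.append(cells)
    return grid


# Hypothetical OCR output: slightly jittered coordinates for a 2x3 table.
words = [
    Word("Metric", 10, 50), Word("2024", 200, 50), Word("2023", 300, 51),
    Word("Revenue", 10, 80), Word("39,071", 198, 81), Word("31,999", 301, 80),
]
grid = extract_grid(words)
print(grid)
```

The sketch also shows why such systems are brittle: a multi-word label like "Net income" would introduce an extra left edge and be split into a spurious column unless another rule is added to handle it.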

Advantages of Rule-based Approaches

- Interpretability: The rules are often straightforward and easy for humans to understand and modify.

- Precision: For well-defined table formats, rule-based systems can achieve high accuracy.

Limitations of Rule-based Approaches

- Lack of Flexibility: Rule-based systems struggle to generalize to tables that deviate from expected formats or lack clear visual cues. This can limit the system’s applicability across different domains.

- Complexity in Rule Creation: As table formats become more diverse, the number of rules required grows exponentially, making the system difficult to maintain.

- Difficulty with Unstructured Data: These systems often fail when dealing with tables embedded in unstructured text or with inconsistent formatting.

Machine Learning Approaches

As the limitations of rule-based systems became apparent, researchers turned to machine learning techniques to improve table extraction capabilities. A typical machine learning workflow also relies on OCR, followed by ML models that operate on the words and their locations.

Common Machine Learning Techniques for Table Extraction

- Support Vector Machines (SVM): Used for classifying table regions and individual cells based on features like text alignment, spacing, and formatting.

- Random Forests: Employed for feature-based table detection and structure recognition, leveraging decision trees to identify various table layouts and elements.

- Conditional Random Fields (CRF): Applied to model the sequential nature of table rows and columns. CRFs are particularly effective in capturing dependencies between adjacent cells.

- Neural Networks: Early applications of neural networks targeted table structure recognition and cell classification. More recent approaches include deep learning models like Convolutional Neural Networks (CNNs) for image-based table detection and Recurrent Neural Networks (RNNs) for understanding relationships between cells in a table. We will cover these in depth in the next section.

Advantages of Machine Learning Approaches

- Improved Flexibility: ML models can learn to recognize a wider variety of table formats compared to rule-based systems.

- Adaptability: With proper training data, ML models can be adapted to new domains more easily than rewriting rules.

Challenges in Machine Learning Approaches

- Data Dependency: The performance of ML models depends heavily on the quality and quantity of training data, which can be expensive and time-consuming to collect and label.

- Feature Engineering: Traditional ML approaches often require careful feature engineering, which can be complex for diverse table formats.

- Scalability Issues: As the variety of table formats increases, the models may require frequent retraining and updating to maintain accuracy.

- Contextual Understanding: Many traditional ML models struggle with understanding the context surrounding tables, which is often crucial for correct interpretation.

Deep Learning Approaches

With the rise of computer vision over the last decade, several deep learning architectures have tried to solve table extraction. Typically, these models are some variation of object-detection models where the objects being detected are “tables”, “columns”, “rows”, “cells” and “merged cells”.

Some of the well-known architectures in this domain are:

- Table Transformers: A variation of DETR that has been trained exclusively for table detection and recognition. It is known for its simplicity and reliability on a diverse variety of images.

- MuTabNet: One of the top performers on the PubTabNet dataset, this model has three components: a CNN backbone, an HTML decoder, and a cell decoder. Dedicating specialized models to specific tasks is one of the reasons for its performance.

- TableMaster: Another transformer-based model that uses four different tasks in synergy to solve table extraction: structure recognition, line detection, box assignment, and a matching pipeline.

Regardless of the model, all these architectures are responsible for creating the bounding boxes and rely on OCR for placing the text in the right boxes. On top of being extremely compute intensive and time consuming, all the drawbacks of traditional machine learning models still apply here, with the only added advantage of not having to do any feature engineering.

While rule-based, traditional machine learning, and deep learning approaches have made significant contributions to table extraction, they often fall short when faced with the enormous variety and complexity of real-world documents. These limitations have paved the way for more advanced techniques, including the application of Large Language Models, which we will explore in the next section.

Traditional table extraction approaches work well in many circumstances, but there is no doubt about the impact of LLMs on the space. As discussed above, while LLMs were originally designed for natural language processing tasks, they have demonstrated strong capabilities in understanding and processing tabular data. This section introduces key LLMs and explores how they are advancing the state of the art (SOTA) in table extraction.

Some of the most prominent LLMs include:

- GPT (Generative Pre-trained Transformer): Developed by OpenAI, GPT models (such as GPT-4 and GPT-4o) are known for their ability to generate coherent and contextually relevant text. They can understand and process a wide range of language tasks, including table interpretation.

- BERT (Bidirectional Encoder Representations from Transformers): Created by Google, BERT excels at understanding the context of words in text. Its bidirectional training allows it to grasp the full context of a word by looking at the words that come before and after it.

- T5 (Text-to-Text Transfer Transformer): Developed by Google, T5 treats every NLP task as a “text-to-text” problem, which allows it to be applied to a wide range of tasks.

- LLaMA (Large Language Model Meta AI): Created by Meta AI, LLaMA is designed to be more efficient and accessible (open source) than some other larger models. It has shown strong performance across various tasks and has spawned numerous fine-tuned variants.

- Gemini: Developed by Google, Gemini is a multimodal AI model capable of processing and understanding text, images, video, and audio. Its ability to work across different data types makes it particularly interesting for complex table extraction tasks.

- Claude: Created by Anthropic, Claude is known for its strong language understanding and generation capabilities. It has been designed with a focus on safety and ethical considerations, which can be particularly valuable when handling sensitive data in tables.

These LLMs represent the cutting edge of AI language technology, each bringing unique strengths to the table extraction task. Their advanced capabilities in understanding context, processing multiple data types, and generating human-like responses are pushing the boundaries of what’s possible in automated table extraction.

LLM Capabilities in Understanding and Processing Tabular Data

LLMs have shown impressive capabilities in handling tabular data, offering several advantages over traditional methods:

- Contextual Understanding: LLMs can understand the context in which a table appears, including the surrounding text. This allows for more accurate interpretation of table contents and structure.

- Flexible Structure Recognition: These models can recognize and adapt to various table structures, including complex, unpredictable, and non-standard layouts, with more flexibility than rule-based systems. Think of merged cells or nested tables. Keep in mind that while they are better suited to complex tables than traditional methods, LLMs are not a silver bullet and still have inherent challenges that will be discussed later in this guide.

- Natural Language Interaction: LLMs can answer questions about table contents in natural language, making data extraction more intuitive and user-friendly.

- Data Imputation: In cases where table data is incomplete or unclear, LLMs can sometimes infer missing information based on context and general knowledge. This, however, needs to be carefully monitored, as there is a risk of hallucination (we will discuss this in depth later on!).

- Multimodal Understanding: Advanced LLMs can process both text and image inputs, allowing them to extract tables from various document formats, including scanned images. Vision Language Models (VLMs) can be used to identify and extract tables and figures from documents.

- Adaptability: LLMs can be fine-tuned on specific domains or table types, allowing them to specialize in particular areas without losing their general capabilities.

Despite their ability to extract more complex and unpredictable tables than traditional OCR methods, LLMs face several challenges and limitations in table extraction.

- Repeatability: One key challenge in using LLMs for table extraction is the lack of repeatability in their outputs. Unlike rule-based systems or traditional OCR methods, LLMs may produce slightly different results even when processing the same input multiple times. This variability can hinder consistency in applications requiring precise, reproducible table extraction.

- Black Box: LLMs operate as black-box systems, meaning that their decision-making process is not easily interpretable. This lack of transparency complicates error analysis, as users cannot trace how or why the model reached a particular output. In table extraction, this opacity can be problematic, especially when dealing with sensitive data where accountability and understanding of the model’s behavior are essential.

- Fine-Tuning: In some cases, fine-tuning may be required to perform effective table extraction. Fine-tuning is a resource-intensive task that requires substantial amounts of labeled examples, computational power, and expertise.

- Domain Specificity: In general, LLMs are versatile, but they can struggle with domain-specific tables that contain industry jargon or highly specialized content. In these cases, there is likely a need to fine-tune the model to gain a better contextual understanding of the domain at hand.

- Hallucination: A critical concern unique to LLMs is the risk of hallucination, that is, the generation of plausible but incorrect data. In table extraction, this could manifest as inventing table cells, misinterpreting column relationships, or fabricating data to fill perceived gaps. Such hallucinations can be particularly problematic because they may not be immediately obvious, are presented to the user confidently, and could lead to significant errors in downstream data analysis. You will see some examples of the LLM taking creative control when creating column names in the following section.

- Scalability: LLMs face challenges in scalability when handling large datasets. As the volume of data grows, so do the computational demands, which can lead to slower processing and performance bottlenecks.

- Cost: Deploying LLMs for table extraction can be expensive. The costs of cloud infrastructure, GPUs, and energy consumption can add up quickly, making LLMs a costly option compared to more traditional methods.

- Privacy: Using LLMs for table extraction often involves processing sensitive data, which can raise privacy concerns. Many LLMs rely on cloud-based platforms, making it challenging to ensure compliance with data protection regulations and safeguard sensitive information from potential security risks. As with any AI technology, handling potentially sensitive information appropriately, ensuring data privacy, and addressing ethical considerations, including bias mitigation, are paramount.
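One inexpensive guard against the hallucination risk described above is to check that every number in the LLM's table actually occurs somewhere in the source text. The sketch below is a minimal version of that idea, not an established method: `unsupported_numbers` and its normalization rules are assumptions made for illustration, and the sample table deliberately contains one invented value.

```python
import re

# Matches a run of digits that may contain thousands separators or decimals.
NUMBER = re.compile(r"\d[\d,.]*")


def normalize(token):
    """Drop thousands separators and trailing periods: '9,392.' -> '9392'."""
    return token.replace(",", "").rstrip(".")


def unsupported_numbers(table_rows, source_text):
    """Return (row, column, cell) for cells with a number absent from the source."""
    known = {normalize(t) for t in NUMBER.findall(source_text)}
    flagged = []
    for r, row in enumerate(table_rows):
        for c, cell in enumerate(row):
            for token in NUMBER.findall(cell):
                if normalize(token) not in known:
                    flagged.append((r, c, cell))
                    break  # one report per cell is enough
    return flagged


source = "Revenue 39,071 31,999 22% Net income 13,465 7,789 73%"
table = [
    ["Revenue", "39,071", "31,999", "22%"],
    ["Net income", "13,465", "7,789", "75%"],  # 75% is deliberately wrong
]
print(unsupported_numbers(table, source))
```

A check like this only catches values that appear nowhere in the source. It will not notice a real value placed in the wrong row or column, so it complements manual review rather than replacing it.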

Given these advantages and drawbacks, the community has settled on the following ways of using LLMs to extract tabular data from documents:

- Use OCR techniques to extract documents into machine-readable formats, then present the result to an LLM.

- In the case of VLMs, we can additionally pass an image of the document directly.
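For the second option, the page image is usually sent alongside the text instruction. The sketch below only builds the request messages and does not call any API. The message layout follows the image-input shape of OpenAI's Chat Completions API as I understand it; other providers expect different structures, so treat the layout, the helper name `build_vision_messages`, and the prompt wording as assumptions.

```python
import base64


def build_vision_messages(image_bytes, mime_type="image/png"):
    """Build a chat message list that carries a page image as a base64 data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return [
        {
            "role": "system",
            "content": "You extract tables from document images into Markdown.",
        },
        {
            "role": "user",
            "content": [
                {"type": "text", "text": "Please extract every table on this page."},
                {
                    "type": "image_url",
                    "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
                },
            ],
        },
    ]


# Placeholder bytes stand in for a real scan, e.g. open("page.png", "rb").read().
messages = build_vision_messages(b"not-a-real-image")
image_url = messages[1]["content"][1]["image_url"]["url"]
print(image_url[:22])
```

Sending the image directly skips the OCR step, so OCR misreads cannot propagate, but the model then has to read the pixels itself, which brings its own error modes on low-quality scans.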

LLMs vs Traditional Techniques

When it comes to document processing, choosing between traditional methods and OCR-based LLMs depends on the specific requirements of the task. Let’s look at several aspects to evaluate when making a decision:

In practice, systems often combine the two, using OCR for initial text extraction and LLMs for deeper analysis and interpretation, to achieve the best results in document processing tasks.

Evaluating the performance of LLMs in table extraction is a complex task due to the variety of table formats, document types, and extraction requirements. Here’s an overview of common benchmarking approaches and metrics:

Common Benchmarking Datasets

- SciTSR (Scientific Table Structure Recognition Dataset): Contains tables from scientific papers, challenging due to their complex structures.

- TableBank: A large-scale dataset with tables from scientific papers and financial reports.

- PubTabNet: A large dataset of tables from scientific publications, useful for both structure recognition and content extraction.

- ICDAR (International Conference on Document Analysis and Recognition) datasets: Various competition datasets focusing on document analysis, including table extraction.

- Vision Document Retrieval (ViDoRe) Benchmark: Focused on evaluating document retrieval performance on visually rich documents containing tables, images, and figures.

Key Performance Metrics

Evaluating the performance of table extraction is a complex task, as performance involves not only extracting the values held within a table, but also the structure of the table. Elements that can be evaluated include cell content, as well as structural elements like cell topology (layout) and location.

- Precision: The proportion of correctly extracted table elements out of all extracted elements.

- Recall: The proportion of correctly extracted table elements out of all actual table elements in the document.

- F1 Score: The harmonic mean of precision and recall, providing a balanced measure of performance.

- TEDS (Tree Edit Distance based Similarity): A metric specifically designed to evaluate the accuracy of table extraction tasks. It measures the similarity between the extracted table’s structure and the ground truth table by calculating the minimum number of operations (insertions, deletions, or substitutions) required to transform one tree representation of a table into another.

- GriTS (Grid Table Similarity): GriTS is a table structure recognition (TSR) evaluation framework for measuring the correctness of extracted table topology, content, and location. It uses metrics like precision and recall, and calculates partial correctness by scoring the similarity between predicted and actual table structures, instead of requiring an exact match.
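The first three metrics are simple enough to compute by hand. The sketch below scores a predicted table against a ground-truth table by exact cell match, where a cell counts as correct only if its row, column, and text all agree. This is a deliberately simplified scheme for illustration; it is not TEDS or GriTS, which also give partial credit, and the function name `cell_scores` is an assumption.

```python
def cell_scores(predicted, truth):
    """Return (precision, recall, f1) for exact-match cells.

    Each table is a list of rows, and each row is a list of strings.
    """
    pred_cells = {
        (r, c, v.strip()) for r, row in enumerate(predicted) for c, v in enumerate(row)
    }
    true_cells = {
        (r, c, v.strip()) for r, row in enumerate(truth) for c, v in enumerate(row)
    }
    correct = len(pred_cells & true_cells)
    precision = correct / len(pred_cells) if pred_cells else 0.0
    recall = correct / len(true_cells) if true_cells else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


truth = [
    ["Metric", "2024", "2023"],
    ["Income from operations", "14,847", "9,392"],
]
predicted = [
    ["Metric", "2024", "2023"],
    ["Income from operations", "14,847", "9,302"],  # one misread cell
]
precision, recall, f1 = cell_scores(predicted, truth)
print(precision, recall, f1)
```

With five of six cells correct, all three scores come out to 5/6. Exact matching is strict: a single shifted row would mark every cell below it as wrong, which is one motivation for similarity-based metrics such as TEDS and GriTS.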

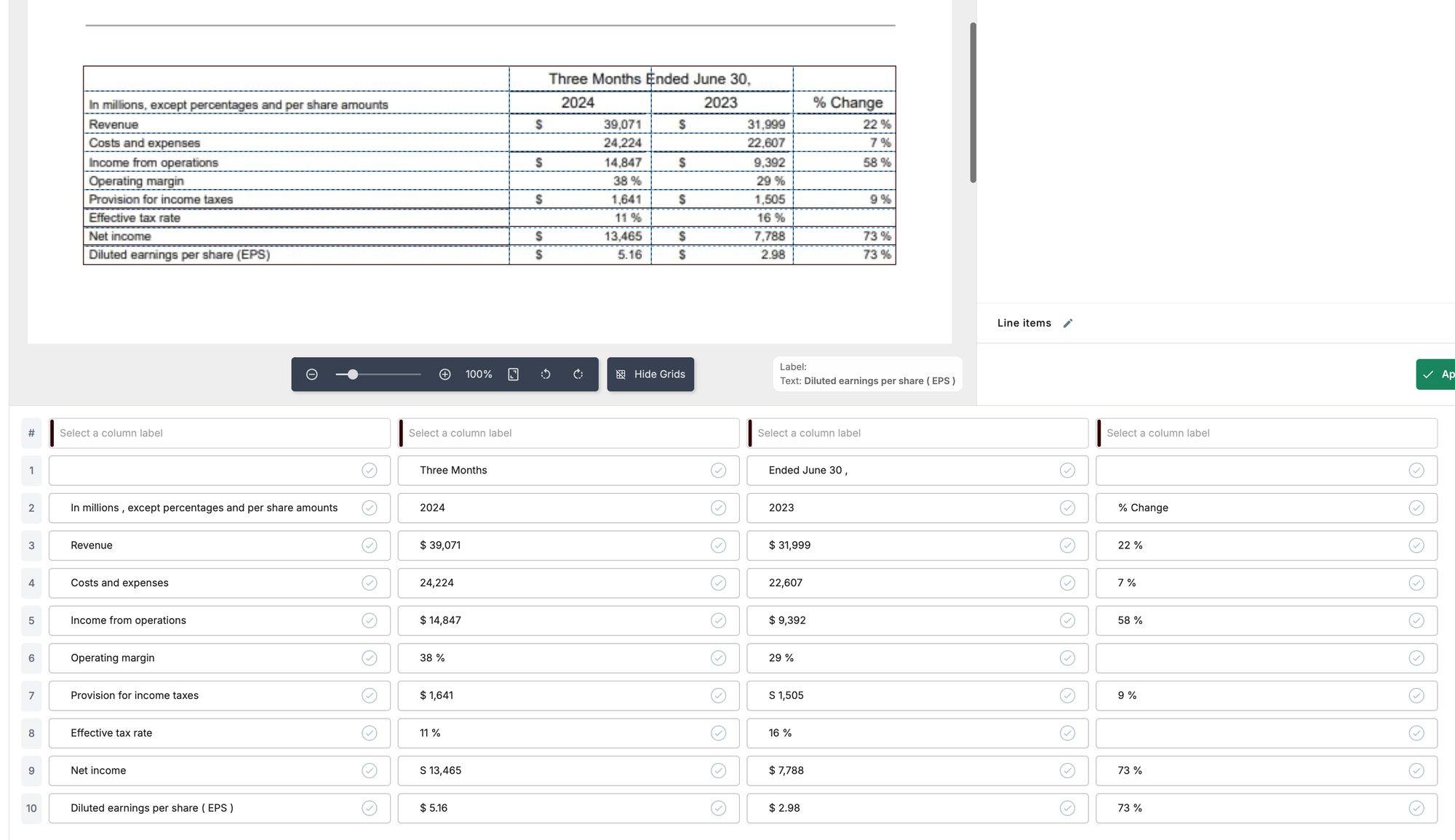

In this section, we will code the implementation of table extraction using an LLM. We will extract a table from the first page of a Meta earnings report as seen here:

This process will cover the following key steps:

- OCR

- Call LLM APIs to extract tables

- Parsing the API output

- Finally, reviewing the result

1. Pass Document to OCR Engine like Nanonets:

import requests
import base64
import json

url = "https://app.nanonets.com/api/v2/OCR/FullText"
payload = {"urls": ["MY_IMAGE_URL"]}
files = [
    (
        "file",
        # The uploaded file is a PNG, so the content type is set to image/png.
        ("FILE_NAME", open("/content/meta_table_image.png", "rb"), "image/png"),
    )
]
headers = {}

# "XXX" is a placeholder for your API key.
response = requests.request(
    "POST",
    url,
    headers=headers,
    data=payload,
    files=files,
    auth=requests.auth.HTTPBasicAuth("XXX", ""),
)

def extract_words_text(data):
    # Parse the JSON string returned by the API
    parsed_data = json.loads(data)
    # Navigate to the 'words' array
    words = parsed_data["results"][0]["page_data"][0]["words"]
    # Extract only the 'text' field from each word and join them
    text_only = " ".join(word["text"] for word in words)
    return text_only

extracted_text = extract_words_text(response.text)
print(extracted_text)

OCR Result:

FACEBOOK Meta Reports Second Quarter 2024 Results MENLO PARK Calif. July 31.2024 /PRNewswire/ Meta Platforms Inc (Nasdag METAX today reported financial results for the quarter ended June 30, 2024 "We had strong quarter and Meta Al is on track to be the most used Al assistant in the world by the end of the year said Mark Zuckerberg Meta founder and CEC "We've released the first frontier-level open source Al model we continue to see good traction with our Ray-Ban Meta Al glasses and we're driving good growth across our apps Second Quarter 2024 Financial Highlights Three Months Ended June 30 In millions excent percentages and ner share amounts 2024 2023 % Change Revenue 39.071 31.999 22 Costs and expenses 24.224 22.607 7% Income from onerations 14.847 9302 58 Operating margin 38 29 Provision for income taxes 1.64 1505 0.0 Effective tax rate 11 16 % Net income 13.465 7.789 73 Diluted earnings per share (FPS 5.16 2.0 73 Second Quarter 2024 Operational and Other Financial Highlights Family daily active people (DAPY DAP was 3.27 billion on average for June 2024, an increase of 7% year -over vear Ad impressions Ad impressions delivered across our Family of Apps increased by 10% year -over-vear Average price per ad Average price per ad increased by 10% vear -over-year Revenue Total revenue was $39.07 billion an increase of 22% year-over -year Revenue or a constant

Discussion: The result is formatted as a long string of text, and while the overall accuracy is fair, some words and numbers were extracted incorrectly. This highlights one area where using LLMs to process this extraction could be beneficial, as the LLM can use surrounding context to understand the text even when some words are extracted incorrectly.

Keep in mind that if there are issues with the OCR results for numeric content in tables, it is unlikely the LLM can fix them, which means we should carefully check the output of any OCR system. An example in this case is one of the actual table values, ‘9,392’, which was extracted incorrectly as ‘9302’.
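Some OCR misreads in financial tables can be caught with plain arithmetic, because the table states the same fact twice: the two values and their percentage change. The sketch below is a minimal example of such a consistency check. The helper names and the 0.5-point tolerance (to allow for rounding to whole percentages) are assumptions made for illustration.

```python
def percent_change(current, previous):
    """Year-over-year change in percent."""
    return (current - previous) / previous * 100


def is_consistent(current, previous, reported_pct, tolerance=0.5):
    """True if the reported % change matches the two values after rounding."""
    return abs(percent_change(current, previous) - reported_pct) <= tolerance


# Income from operations: 14,847 in 2024, reported change of 58%.
print(is_consistent(14847, 9392, 58))  # True: value as printed in the report
print(is_consistent(14847, 9302, 58))  # False: value as misread by OCR
```

The misread ‘9302’ implies a change of about 59.6%, which no longer rounds to the reported 58%, so the row gets flagged for review. The check only works for rows where the table carries this kind of redundancy.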

2. Send extracted text to LLMs and parse the output:

Now that we have our text extracted using OCR, let’s pass it to several different LLMs, instructing them to extract any tables detected within the text into Markdown format.

A note on prompt engineering: When testing LLM table extraction, it is possible that prompt engineering could improve your extraction. Aside from tweaking your prompt to increase accuracy, you could give custom instructions, for example to extract the table into a particular format (Markdown, JSON, HTML, etc.) or to provide a description of each column within the table based on the surrounding text and the context of the document.

OpenAI GPT-4o:

%pip install openai

from openai import OpenAI

# Set your OpenAI API key
client = OpenAI(api_key='OpenAI_API_KEY')

def extract_table(extracted_text):
    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[
            {"role": "system", "content": "You are a helpful assistant that extracts table data into Markdown format."},
            {"role": "user", "content": f"Here is text that contains a table or multiple tables:\n{extracted_text}\n\nPlease extract the table."}
        ]
    )
    # The generated Markdown is in the first choice's message
    return response.choices[0].message.content

extract_table(extracted_text)

Results:

Discussion: The values extracted from the text are placed into the table correctly and the general structure of the table is representative. The cells that should not have a value correctly contain a ‘-’. However, there are a few interesting phenomena. Firstly, the LLM gave the first column the name ‘Financial Metrics’, which is not in the original document. It also appended ‘(in millions)’ and ‘(%)’ to several financial metric names. These additions make sense within the context, but they are not an exact extraction. Secondly, the column name ‘Three Months Ended June 30’ should span both 2024 and 2023.
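The LLM returns the table as Markdown text, often wrapped in a sentence or two of commentary, so the parsing step has to pull the rows back out. The sketch below is one minimal way to do that with plain string handling. The function name `parse_markdown_table` is an assumption, and the sample response is hand-written for illustration using values from the report; it is not the actual model output.

```python
def parse_markdown_table(markdown):
    """Return (header, rows) from the first pipe table found in the text."""
    table_lines = []
    for line in markdown.splitlines():
        stripped = line.strip()
        if stripped.startswith("|"):
            table_lines.append(stripped)
        elif table_lines:
            break  # the first table has ended

    parsed = []
    for line in table_lines:
        # Drop the outer pipes, then split the remaining text into cells.
        inner = line[1:-1] if line.endswith("|") else line[1:]
        cells = [cell.strip() for cell in inner.split("|")]
        # Skip the separator row, e.g. | --- | :---: |
        if all(cell and set(cell) <= set("-:") for cell in cells):
            continue
        parsed.append(cells)

    if not parsed:
        return [], []
    return parsed[0], parsed[1:]


# Hand-written sample response, not real model output.
sample = """Here is the table:

| Metric | 2024 | 2023 | % Change |
| --- | --- | --- | --- |
| Revenue | 39,071 | 31,999 | 22% |
| Operating margin | 38% | 29% | - |

Let me know if you need anything else."""

header, rows = parse_markdown_table(sample)
print(header)
print(rows)
```

Once the table is a list of rows, it can be compared against the source or written out as CSV or JSON. Note that this sketch does not handle escaped pipes inside cells, and a data row made up entirely of ‘-’ placeholders would be mistaken for a separator row.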

Google gemini-pro:

import google.generativeai as genai

# Set your Gemini API key
genai.configure(api_key="Your_Google_AI_API_KEY")

def extract_table(extracted_text):
    # Set up the model
    model = genai.GenerativeModel("gemini-pro")
    # Create the prompt
    prompt = f"""Here is text that contains a table or multiple tables:

{extracted_text}

Please extract the table and format it in Markdown."""
    # Generate the response
    response = model.generate_content(prompt)
    # Return the generated content
    return response.text

result = extract_table(extracted_text)
print(result)

Result:

Discussion: Again, the extracted values are in the correct places. The LLM created some column names, including ‘Category’, ‘Q2 2024’, and ‘Q2 2023’, while leaving out ‘Three Months Ended June 30’. Gemini decided to put ‘n/a’ in cells that had no data, rather than ‘-’. Overall the extraction looks good in content and structure based on the context of the document, but if you were looking for an exact extraction, this is not exact.

Mistral-Nemo-Instruct

import requests

def query_huggingface_api(prompt, model_name="mistralai/Mistral-Nemo-Instruct-2407"):
    API_URL = f"https://api-inference.huggingface.co/models/{model_name}"
    headers = {"Authorization": "Bearer YOUR_HF_TOKEN"}
    payload = {
        "inputs": prompt,
        "parameters": {
            "max_new_tokens": 1024,
            "temperature": 0.01,  # low temperature, reduce creativity for extraction
        },
    }
    response = requests.post(API_URL, headers=headers, json=payload)
    return response.json()

prompt = f"Here is text that contains a table or multiple tables:\n{extracted_text}\n\nPlease extract the table in Markdown format."
result = query_huggingface_api(prompt)
print(result)

# Extracting the generated text
if isinstance(result, list) and len(result) > 0 and "generated_text" in result[0]:
    generated_text = result[0]["generated_text"]
    print("\nGenerated Text:", generated_text)
else:
    print("\nError: Unable to extract generated text.")

Outcome:

Dialogue: Mistral-Nemo-Instruct, is a much less highly effective LLM than GPT-4o or Gemini and we see that the extracted desk is much less correct. The unique rows within the desk are represented effectively, however the LLM interpreted the bullet factors on the backside of the doc web page to be part of the desk as effectively, which shouldn’t be included.

Prompt Engineering

Let’s do some prompt engineering to see if we can improve this extraction:

    prompt = f"Here is text that contains a table or multiple tables:\n{extracted_text}\n\nPlease extract the table 'Second Quarter 2024 Financial Highlights' in Markdown format. Make sure to only extract tables, not bullet points."

    result = query_huggingface_api(prompt)

Result:

Discussion: Here, we engineer the prompt to specify the title of the table we want extracted, and remind the model to only extract tables, not bullet points. The results are significantly improved over the initial prompt. This shows we can use prompt engineering to improve results, even with smaller models.
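If you find yourself tweaking the same prompt for many documents, it can help to build it from parts. The sketch below is one possible way to do that. `build_table_prompt` and its parameters are hypothetical names introduced for illustration, and the wording simply mirrors the prompt used above.

```python
def build_table_prompt(extracted_text, table_title=None, exclusions=(), output_format="Markdown"):
    """Assemble an extraction prompt with an optional table title and exclusions."""
    target = f"the table '{table_title}'" if table_title else "the table"
    prompt = (
        "Here is text that contains a table or multiple tables:\n"
        f"{extracted_text}\n\n"
        f"Please extract {target} in {output_format} format."
    )
    if exclusions:
        # Remind the model what should not be treated as table content.
        prompt += " Make sure to only extract tables, not " + ", ".join(exclusions) + "."
    return prompt
```

Calling `build_table_prompt(extracted_text, "Second Quarter 2024 Financial Highlights", ["bullet points"])` reproduces the engineered prompt above, while leaving out the title and exclusions gives the initial one.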

Nanonets

With a few clicks on the website and within a minute, the author could extract all the data. The UI lets you verify and correct the outputs if needed. In this case there was no need for corrections.

Blurry Image Demonstration

Next, we will try to extract a table from a lower quality scanned document. This time we will use the Gemini pipeline implemented above and see how it does:

Result:

Discussion: The extraction was not accurate at all! It seems that the low quality of the scan has a drastic impact on the LLM’s ability to extract the embedded elements. What would happen if we zoomed in on the table?

Zoomed In Blurry Table

Result:

Discussion: This method still falls short. The results are slightly improved but still quite inaccurate. The problem is that we are passing the data from the original document through so many steps (OCR, prompt engineering, LLM extraction) that it is difficult to ensure a quality extraction.

Takeaways:

- LLMs like GPT-4o, Gemini, and Mistral can be used to extract tables from OCR extractions, with the ability to output in various formats such as Markdown or JSON.

- The accuracy of the LLM-extracted table depends heavily on the quality of the OCR text extraction.

- The flexibility to give instructions to the LLM on how to extract and format the table is one advantage over traditional table extraction methods.

- LLM-based extraction can be accurate in many cases, but there is no guarantee of consistency across multiple runs. The results may vary slightly each time.

- The LLM sometimes makes interpretations or additions that, while logical in context, are not exact reproductions of the original table. For example, it might create column names that were not in the original table.

- The quality and format of the input image significantly impact the OCR process and the LLM’s extraction accuracy.

- Complex table structures (e.g., multi-line cells) can confuse the LLM, leading to incorrect extractions.

- LLMs can handle multiple tables in a single image, but the accuracy may vary depending on the quality of the OCR step.

- While LLMs can be effective for table extraction, they act as a “black box,” making it difficult to predict or control their exact behavior.

- The approach requires careful prompt engineering and potentially some pre-processing of images (like zooming in on tables) to achieve optimal results.

- This method of table extraction using OCR and LLMs could be particularly useful for applications where flexibility and handling of various table formats are required, but may not be ideal for scenarios demanding 100% consistency and accuracy, or for low-quality document images.
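Since results can vary between runs, one inexpensive safeguard is to run the same extraction several times and flag the cells on which the runs disagree. The sketch below is an illustration under stated assumptions, not a method used in the demonstrations above. `parse_markdown_table` and `find_unstable_cells` are hypothetical helpers, and the parser assumes simple pipe-delimited Markdown tables whose separator rows use at least three dashes.

```python
import re


def parse_markdown_table(markdown: str) -> list[list[str]]:
    """Parse pipe-delimited rows, skipping the header separator row."""
    rows = []
    for line in markdown.splitlines():
        line = line.strip()
        if not (line.startswith("|") and line.endswith("|")):
            continue  # ignore text around the table
        cells = [c.strip() for c in line[1:-1].split("|")]
        # Assumption: separator cells look like ---, :---, or :---:
        if all(re.fullmatch(r":?-{3,}:?", c) for c in cells):
            continue
        rows.append(cells)
    return rows


def find_unstable_cells(runs: list[str]) -> list[tuple[int, int, list[str]]]:
    """Return (row, col, values) for every cell on which the runs disagree."""
    tables = [parse_markdown_table(r) for r in runs]
    shapes = {tuple(len(row) for row in t) for t in tables}
    if len(shapes) != 1:
        raise ValueError("Runs produced tables with different shapes")
    unstable = []
    for r, row in enumerate(tables[0]):
        for c in range(len(row)):
            values = [t[r][c] for t in tables]
            if len(set(values)) > 1:
                unstable.append((r, c, values))
    return unstable
```

Cells that come back identical on every run are not guaranteed to be correct, but cells that differ are good candidates for human review.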

Vision Language Models (VLMs)

Vision Language Models (VLMs) are generative AI models that are trained on images as well as text and are considered multimodal. This means we can send an image of a document directly to a VLM for extraction and analytics. While the OCR methods implemented above are useful for standardized, consistent, and clean document extraction, the ability to pass an image of a document directly to the model could potentially improve the results, as there is no need to rely on the accuracy of OCR transcriptions.

Let’s take the example we implemented on the blurry image above, but pass it straight to the model rather than go through the OCR step first. In this case we will use the gemini-1.5-flash VLM model:

Zoomed In Blurry Table:

Gemini-1.5-flash implementation:

    from PIL import Image

    # Assumes `genai` (google.generativeai) was imported and configured earlier in the article.
    def extract_table(image_path):
        # Set up the model
        model = genai.GenerativeModel("gemini-1.5-flash")
        image = Image.open(image_path)

        # Create the prompt
        prompt = """Here is text that contains a table or multiple tables - Please extract the table and format it in Markdown."""

        # Generate the response
        response = model.generate_content([prompt, image])

        # Return the generated content
        return response.text

    result = extract_table("/content/Screenshot_table.png")
    print(result)

Result:

Discussion: This method worked and correctly extracted the blurry table. For tables where OCR might have trouble getting an accurate recognition, VLMs can fill in the gap. This is a powerful technique, but the challenges we mentioned earlier in the article still apply to VLMs. There is no guarantee of consistent extractions, there is a risk of hallucination, prompt engineering may be required, and VLMs are still black box models.
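Because a VLM skips the OCR step, there is no intermediate text to compare against, so a quick structural check on the output is one of the few automatic sanity checks available. The sketch below uses a hypothetical helper, `check_table_shape`, which assumes the model returns a simple pipe-delimited Markdown table and reports rows whose column count differs from the header.

```python
def check_table_shape(markdown: str) -> list[int]:
    """Return 1-based line numbers of table rows whose width differs from the header."""
    widths = []
    for number, line in enumerate(markdown.splitlines(), start=1):
        stripped = line.strip()
        if stripped.startswith("|") and stripped.endswith("|"):
            widths.append((number, len(stripped[1:-1].split("|"))))
    if not widths:
        raise ValueError("No Markdown table rows found")
    expected = widths[0][1]  # the first table row is treated as the header
    return [number for number, width in widths if width != expected]
```

An empty list only means the table is rectangular. It says nothing about whether the values themselves are right.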

Recent Advancements in VLMs

As you can tell, VLMs are the next logical step beyond LLMs: on top of text, the model can also process images. Given the vast nature of the field, we have dedicated an entire article to summarizing the key insights and takeaways.

Bridging Images and Text: A Survey of VLMs

Dive into the world of Vision-Language Models (VLMs) and explore how they bridge the gap between images and text. Learn more about their applications, advancements, and future trends.

To summarize, VLMs are hybrids of vision models and LLMs that try to align image inputs with text inputs to perform all the tasks that LLMs do. Although there are dozens of reliable architectures and models available as of now, more and more models are being released on a weekly basis, and we are yet to see a plateau in the field’s true capabilities.

Cognizant of the drawbacks of LLMs, Nanonets has used several guardrails to ensure the extracted tables are accurate and reliable.

- We convert the OCR output into a rich text format to help the LLM understand the structure and placement of content in the original document.

- The rich text clearly highlights all the required fields, ensuring the LLM can easily distinguish between the content and the desired information.

- All the prompts have been meticulously engineered to minimize hallucinations.

- We include validations both within the prompt and after the predictions to ensure that the extracted fields are always accurate and meaningful.

- In cases of tricky and hard-to-decipher layouts, Nanonets has mechanisms to help the LLM with examples to boost the accuracy.

- Nanonets has devised algorithms to infer the LLM’s correctness and reliably give low confidence to predictions where the LLM may be hallucinating.
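To make the idea of post-prediction validation concrete, here is a generic illustration. It is not Nanonets’ actual algorithm, which the article does not describe. The hypothetical `grounding_score` helper assumes you still have the OCR text and simply checks whether each extracted cell value appears in it verbatim, treating missing values as possible hallucinations.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so OCR spacing differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()


def grounding_score(cells: list[str], ocr_text: str) -> tuple[float, list[str]]:
    """Return the share of non-empty cells found in the OCR text, plus the misses."""
    haystack = _normalize(ocr_text)
    checked = [c for c in cells if _normalize(c)]
    missing = [c for c in checked if _normalize(c) not in haystack]
    if not checked:
        return 0.0, []
    return 1 - len(missing) / len(checked), missing
```

A low score could be used to route a prediction to human review. The threshold is a choice you would tune on your own documents. Invented column names, like the ones Gemini created earlier, would also show up as misses.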


Nanonets offers a versatile and powerful approach to table extraction, leveraging advanced AI technologies to cater to a wide range of document processing needs. Their solution stands out for its flexibility and comprehensive feature set, addressing various challenges in document analysis and data extraction.

- Zero-Training AI Extraction: Nanonets provides pre-trained models capable of extracting data from common document types without requiring additional training. This out-of-the-box functionality allows for rapid deployment in many scenarios, saving time and resources.

- Custom Model Training: Nanonets offers the ability to train custom models. Users can fine-tune extraction processes on their specific document types, improving accuracy for particular use cases.

- Full-Text OCR: Beyond extraction, Nanonets incorporates robust Optical Character Recognition (OCR) capabilities, enabling the conversion of entire documents into machine-readable text.

- Pre-trained Models for Common Documents: Nanonets offers a library of pre-trained models optimized for frequently encountered document types such as receipts and invoices.

- Flexible Table Extraction: The platform supports both automatic and manual table extraction. While AI-driven automatic extraction handles most cases, the manual option allows for human intervention in complex or ambiguous scenarios, ensuring accuracy and control.

- Document Classification: Nanonets can automatically categorize incoming documents, streamlining workflows by routing different document types to appropriate processing pipelines.

- Custom Extraction Workflows: Users can create tailored document extraction workflows, combining various features like classification, OCR, and table extraction to suit specific business processes.

- Minimal and No Code Setup: Unlike traditional methods that may require installing and configuring multiple libraries or setting up complex environments, Nanonets offers a cloud-based solution that can be accessed and implemented with minimal setup. This reduces the time and technical expertise needed to get started. Users can often train custom models by simply uploading sample documents and annotating them through the interface.

- User-Friendly Interface: Nanonets provides an intuitive web interface for many tasks, reducing the need for extensive coding. This makes it accessible to non-technical users who might struggle with code-heavy solutions.

- Quick Deployment & Low Technical Debt: Pre-trained models, easy retraining, and configuration-based updates allow for rapid scaling without needing extensive coding or system redesigns.

By addressing these common pain points, Nanonets offers a more accessible and efficient approach to table extraction and document processing. This can be particularly valuable for organizations looking to implement these capabilities without investing in extensive technical resources or enduring long development cycles.

Conclusion

The landscape of table extraction technology is undergoing a significant transformation with the application of LLMs and other AI-driven tools like Nanonets. Our overview has highlighted several key insights:

- Traditional methods, while still valuable and proven for simple extractions, can struggle with complex and varied table formats, especially in unstructured documents.

- LLMs have demonstrated flexible capabilities in understanding context and adapting to different table structures, and in some cases can extract data with improved accuracy and flexibility.

- While LLMs can bring unique advantages to table extraction, such as contextual understanding, they are not as consistent as tried and true OCR methods. It is likely that a hybrid approach is the right path.

- Tools like Nanonets are pushing the boundaries of what is possible in automated table extraction, offering solutions that range from zero-training models to highly customizable workflows.

Emerging trends and areas for further research include:

- The development of more specialized LLMs tailored specifically for table extraction tasks and fine-tuned for domain-specific use cases and terminology.

- Enhanced methods for combining traditional OCR with LLM-based approaches in hybrid systems.

- Advancements in VLMs, reducing reliance on OCR accuracy.

It is also important to understand that the future of table extraction lies in the combination of AI capabilities and human expertise. While AI can handle increasingly complex extraction tasks, there are inconsistencies in these AI extractions, as we saw in the demonstration section of this article.

Overall, LLMs at the very least offer us a tool to improve and analyze table extractions. At the time of writing this article, the best approach is likely combining traditional OCR and AI technologies for top extraction capabilities. However, keep in mind that this landscape changes quickly and LLM/VLM capabilities will continue to improve. Being prepared to adapt extraction strategies will continue to be at the forefront of data processing and analytics.