
Introduction

If you're starting your journey into the world of Vision Language Models (VLMs), you're entering an exciting and rapidly evolving field that bridges the gap between visual and textual data. On the way to fully integrating VLMs into your business, there are roughly three phases you need to go through.

Choosing the Right Vision Language Model (VLM) for Your Business Needs

Selecting the right VLM for your specific use case is essential to unlocking its full potential and driving success for your business. A comprehensive survey of available models can help you navigate the wide range of options, providing a solid foundation for understanding their strengths and applications.

Identifying the Best VLM for Your Dataset

Once you've surveyed the landscape, the next challenge is identifying which VLM best suits your dataset and specific requirements. Whether you're focused on structured data extraction, information retrieval, or another task, narrowing down the right model is key. If you're still in this phase, this guide on selecting the right VLM for data extraction offers practical insights to help you make the best choice for your project.

Fine-Tuning Your Vision Language Model

Now that you've chosen a VLM, the real work begins: fine-tuning. Fine-tuning your model is essential to achieving the best performance on your dataset. This process ensures that the VLM not only handles your specific data but also generalizes better to new examples from the same domain, ultimately leading to more accurate and reliable results. By customizing the model to your particular needs, you set the stage for success in your VLM-powered applications.

In this article, we'll dive into the different types of fine-tuning techniques available for Vision Language Models (VLMs), exploring when and how to apply each approach based on your specific use case. We'll walk through setting up the code for fine-tuning, providing a step-by-step guide so you can seamlessly adapt your model to your data. Along the way, we'll discuss important hyperparameters, such as learning rate, batch size, and weight decay, that can significantly impact the outcome of your fine-tuning process. Additionally, we'll visualize the results of one such fine-tuning exercise, comparing the old, pre-fine-tuning results from a previous post to the new, improved outputs after fine-tuning. Finally, we'll wrap up with key takeaways and best practices to keep in mind throughout the fine-tuning process, so you get the best possible performance from your VLM.

Types of Fine-Tuning

Fine-tuning is a crucial process in machine learning, particularly in the context of transfer learning, where pre-trained models are adapted to new tasks. Two primary approaches to fine-tuning are LoRA (Low-Rank Adaptation) and Full Model Fine-Tuning. Understanding the strengths and limitations of each can help you make informed decisions tailored to your project's needs.

LoRA (Low-Rank Adaptation)

LoRA is an innovative method designed to make fine-tuning more efficient. Here are some key features and benefits:

• Efficiency: LoRA freezes the pre-trained weights and trains only a small number of additional parameters injected into specific layers of the model. This means you can achieve good performance without massive computational resources, making it ideal for environments where resources are limited.

• Speed: Since LoRA trains fewer parameters, training is generally faster than full model fine-tuning. This allows for quicker iterations and experiments, which is especially useful in rapid prototyping phases.

• Parameter Efficiency: LoRA learns low-rank updates on top of the frozen original weights, which helps retain the knowledge in the original model while adapting to new tasks. This reduces, though does not eliminate, the risk that the model forgets previously learned knowledge (a phenomenon known as catastrophic forgetting).

• Use Cases: LoRA is particularly effective in scenarios with limited labeled data or a constrained computational budget, such as mobile applications or edge devices. It's also useful for large language models (LLMs) and vision models in specialized domains.
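To make the low-rank idea concrete, here is a minimal pure-Python sketch (toy sizes chosen for illustration, no deep learning framework) of the LoRA forward pass h = W x + (alpha / r) B A x, where the pre-trained W stays frozen and only the small factors A and B are trained. The 1536 × 1536 layer in the parameter count is a hypothetical example, not a statement about any particular model.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, rank):
    """h = W x + (alpha / rank) * B (A x); W is frozen, only A and B are trained."""
    base = matvec(W, x)               # frozen pre-trained path
    delta = matvec(B, matvec(A, x))   # low-rank path: A is rank x d_in, B is d_out x rank
    scale = alpha / rank
    return [b + scale * d for b, d in zip(base, delta)]


def trainable_params(d_out, d_in, rank=None):
    """Trainable parameters for one d_out x d_in weight: full fine-tuning vs LoRA."""
    if rank is None:
        return d_out * d_in           # full fine-tuning updates every entry of W
    return rank * (d_out + d_in)      # LoRA trains B (d_out x r) and A (r x d_in)


W = [[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]]  # frozen 2 x 3 weight
A = [[0.5, 0.5, 0.5]]                     # rank-1 factor, 1 x 3
B_init = [[0.0], [0.0]]                   # B starts at zero, so training starts from W exactly
x = [1.0, 2.0, 3.0]

print(lora_forward(W, A, B_init, x, alpha=16, rank=1))  # [7.0, -1.0], identical to W x
print(trainable_params(1536, 1536), trainable_params(1536, 1536, rank=8))  # 2359296 24576
```

Initializing B to zero (as in the original LoRA paper) means the adapted model starts out identical to the base model, and at rank 8 the adapter for this hypothetical layer has about 1% of the layer's parameters.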

Full Model Fine-Tuning

Full model fine-tuning involves adjusting the entire set of parameters of a pre-trained model. Here are the main aspects to consider:

• Resource Intensity: This approach requires significantly more computational power and memory, as it modifies all layers of the model. Depending on the size of the model and dataset, this can result in longer training times and the need for high-performance hardware.

• Potential for Better Results: By adjusting all parameters, full model fine-tuning can lead to improved performance, especially when you have a large, diverse dataset. This method allows the model to fully adapt to the specifics of your new task, potentially resulting in superior accuracy and robustness.

• Flexibility: Full model fine-tuning can be applied to a broader range of tasks and data types. It's often the go-to choice when ample labeled data is available for training.

• Use Cases: Ideal for scenarios where accuracy is paramount and the available computational resources are sufficient, such as large-scale enterprise applications or academic research with extensive datasets.
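A rough way to see the resource gap is a back-of-the-envelope memory estimate. The sketch below uses a common rule of thumb for mixed-precision training with the Adam optimizer (about 16 bytes per trained parameter) and assumes frozen weights are stored in bf16. The 2-billion and 20-million parameter counts are illustrative round numbers, and activations (which can be large for image inputs) are deliberately ignored.

```python
# Rule-of-thumb bytes per trained parameter with mixed precision + Adam (approximation):
# bf16 weights (2) + bf16 gradients (2) + fp32 master weights (4) + Adam m (4) + Adam v (4)
BYTES_PER_TRAINED_PARAM = 2 + 2 + 4 + 4 + 4
BYTES_PER_FROZEN_PARAM = 2  # frozen weights only need to be stored, here assumed in bf16


def full_ft_gb(num_params):
    """Approximate memory (GB, excluding activations) when every parameter is trained."""
    return num_params * BYTES_PER_TRAINED_PARAM / 1e9


def lora_gb(num_params, num_lora_params):
    """Approximate memory (GB, excluding activations) with a frozen base and trained adapters."""
    return (num_params * BYTES_PER_FROZEN_PARAM
            + num_lora_params * BYTES_PER_TRAINED_PARAM) / 1e9


print(full_ft_gb(2e9))      # 32.0
print(lora_gb(2e9, 20e6))   # 4.32
```

Under these assumptions, most of the saving comes from not keeping gradients and optimizer state for the frozen base weights.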

Prompt, Prefix and P-Tuning

These three methods are used to find the best prompts for your particular task. Essentially, we add learnable prompt embeddings to an input prompt and update them based on the desired task loss. These methods help generate more optimized prompts for the task without updating the model's own weights.

For example, in prompt tuning for sentiment analysis, the prompt could be changed from “Classify the sentiment of the following review:” to “[SOFT-PROMPT-1] Classify the sentiment of the following review: [SOFT-PROMPT-2]”, where the soft prompts are learned embeddings that have no real-world meaning as words but give the LLM a stronger signal that the user is looking for sentiment classification, removing ambiguity from the input. Prefix tuning and P-tuning are variations on the same concept: prefix tuning interacts more deeply with the model because its learned prefix vectors are injected into the hidden states (the attention keys and values) of every layer rather than only at the input, and P-tuning produces its continuous prompt embeddings with a small trainable prompt encoder.
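The toy sketch below shows the mechanics these methods share, in their simplest form (prompt tuning): a few trainable vectors are prepended to the frozen input embeddings and updated by gradient descent on the task loss, while the embedding table and the model stay untouched. The "model" here is a hypothetical stand-in (a fixed linear read-out over the mean embedding) chosen so the gradient can be written by hand; real prompt tuning backpropagates through the full frozen LLM.

```python
import copy
import random

DIM = 4  # toy embedding width


def frozen_model(vectors, readout):
    """Stand-in for a frozen LLM: a fixed linear read-out of the mean input embedding."""
    n = len(vectors)
    mean = [sum(v[d] for v in vectors) / n for d in range(DIM)]
    return sum(r * m for r, m in zip(readout, mean))


def prompt_tune(token_ids, table, readout, target, n_soft=2, lr=0.5, steps=200, seed=0):
    """Learn n_soft prompt vectors by gradient descent; the table and model stay frozen."""
    rng = random.Random(seed)
    soft = [[rng.uniform(-0.1, 0.1) for _ in range(DIM)] for _ in range(n_soft)]
    tokens = [table[t] for t in token_ids]  # looked up from the frozen embedding table
    seq_len = n_soft + len(tokens)
    losses = []
    for _ in range(steps):
        err = frozen_model(soft + tokens, readout) - target
        losses.append(err ** 2)
        # d(err^2) / d soft[i][d] = 2 * err * readout[d] / seq_len for every soft vector i
        for vec in soft:
            for d in range(DIM):
                vec[d] -= lr * 2 * err * readout[d] / seq_len
    return soft, losses


table = {0: [0.2, -0.1, 0.0, 0.3], 1: [0.0, 0.4, 0.1, -0.2], 2: [-0.3, 0.1, 0.2, 0.0]}
readout = [1.0, 0.5, -0.5, 0.25]
frozen_before = copy.deepcopy(table)
soft, losses = prompt_tune([0, 1, 2], table, readout, target=1.0)
print(f"loss: {losses[0]:.4f} -> {losses[-1]:.2e}")
```

In practice, libraries such as Hugging Face PEFT provide these methods for real models (for example `PromptTuningConfig`, `PrefixTuningConfig` and `PromptEncoderConfig` for P-tuning).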

Quantization-Aware Training

This is an orthogonal concept in which either full fine-tuning or adapter fine-tuning is carried out in lower precision, reducing memory and compute. QLoRA is one such example: it keeps the frozen base model in 4-bit precision and trains LoRA adapters on top of it.
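QLoRA stores the frozen base weights in a 4-bit NormalFloat (NF4) format. The sketch below uses a simpler symmetric int8 "absmax" scheme instead, purely to illustrate the core trade-off of lower precision: each weight shrinks from 4 bytes (fp32) to 1 byte plus a shared scale, at the cost of a rounding error bounded by half the scale.

```python
def quantize_int8(weights):
    """Symmetric absmax quantization of a list of floats to int8 codes plus one scale."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # guard against an all-zero input
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map int8 codes back to approximate float weights."""
    return [c * scale for c in codes]


weights = [0.12, -0.5, 0.033, 0.49, -0.01]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, f"max error {max_err:.4f} (bound: {scale / 2:.4f})")
```

Real schemes such as NF4 quantize in small blocks, each with its own scale, which keeps the error low even when a few weights are much larger than the rest.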

Mixture of Experts (MoE) Fine-Tuning

Mixture of Experts (MoE) fine-tuning involves activating only a subset of the model's parameters (the experts) for each input, allowing efficient resource utilization and improved performance on specific tasks. In MoE architectures, a router selects a few experts for each input token, so only those experts run and receive updates for it; this keeps per-input compute low even as the total parameter count grows, while maintaining high accuracy. This approach enables the model to adaptively leverage the specialized capabilities of different experts, enhancing its ability to generalize across various tasks while reducing computational costs.
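The routing step can be sketched with top-k softmax gating, the pattern used by many sparse MoE layers. To keep it short, this sketch makes two simplifying assumptions: the router logits are passed in directly (a real router computes them from the input with a learned layer), and each "expert" is a scalar function rather than a feed-forward network.

```python
import math


def top_k_gates(router_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their scores."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    exps = {i: math.exp(router_logits[i]) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def moe_forward(x, experts, router_logits, k=2):
    """Weighted sum of the selected experts' outputs; unselected experts are never called."""
    gates = top_k_gates(router_logits, k)
    return sum(weight * experts[i](x) for i, weight in gates.items()), gates


calls = []


def make_expert(index, factor):
    def expert(x):
        calls.append(index)  # record which experts actually ran
        return factor * x
    return expert


experts = [make_expert(i, f) for i, f in enumerate([1.0, 2.0, 3.0, 4.0])]
y, gates = moe_forward(10.0, experts, router_logits=[0.1, 3.0, 2.0, -1.0], k=2)
print(sorted(gates), sorted(calls), round(y, 3))
```

Only two of the four experts execute, which is where the compute saving comes from; during fine-tuning, gradients likewise flow only into the experts (and router weights) that were selected.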

Considerations for Choosing a Fine-Tuning Approach

When deciding between LoRA and full model fine-tuning, consider the following factors:

- Computational Resources: Assess the hardware you have available. If you are limited in memory or processing power, LoRA may be the better choice.

- Data Availability: If you have a small dataset, LoRA's much smaller number of trainable parameters might help you avoid overfitting. Conversely, if you have a large, rich dataset, full model fine-tuning can exploit that data fully.

- Project Goals: Define what you aim to achieve. If rapid iteration and deployment are crucial, LoRA's speed and efficiency may be advantageous. If achieving the highest possible performance is your primary goal, consider full model fine-tuning.

- Domain Specificity: In specialized domains where nuances are critical, full model fine-tuning may provide the depth of adjustment needed to capture these subtleties.

- Overfitting: It is important to keep an eye on the validation loss to make sure that we are not overfitting the training data.

- Catastrophic Forgetting: This refers to the phenomenon where a neural network forgets previously learned information when trained on new data, leading to a decline in performance on earlier tasks. Depending on the context, closely related effects are also discussed as bias amplification or over-specialization. Although similar to overfitting, catastrophic forgetting can occur even when a model performs well on the current validation dataset.
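Watching the validation loss is often automated with early stopping. The minimal sketch below is one common variant (a "patience" counter over successive evaluations); it is an illustration rather than what any particular trainer does internally, although the Hugging Face Trainer's `EarlyStoppingCallback` is built on the same idea.

```python
def early_stopping(val_losses, patience=3, min_delta=0.0):
    """Return (stop_index, best_index): stop once `patience` evals in a row fail to improve."""
    best_loss, best_index, since_best = float("inf"), None, 0
    for i, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_index, since_best = loss, i, 0  # new best checkpoint
        else:
            since_best += 1
            if since_best >= patience:
                return i, best_index
    return None, best_index  # never triggered; keep the best checkpoint seen


print(early_stopping([1.0, 0.8, 0.7, 0.72, 0.75, 0.71]))  # (5, 2)
```

Keeping the checkpoint at `best_index` rather than the last one is what protects you from the overfitting that sets in after the validation loss bottoms out.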

To Summarize

Prompt tuning, LoRA and full model fine-tuning each have unique advantages and suit different scenarios. Understanding the requirements of your project and the resources at your disposal will guide you in selecting the most appropriate fine-tuning strategy. Ultimately, the choice should align with your goals, whether that's achieving efficiency in training or maximizing the model's performance on your specific task.

In the previous article, we saw that the Qwen2 model gave the best accuracy for data extraction. Continuing from there, in the next section we will fine-tune the Qwen2 model on the CORD dataset using LoRA fine-tuning.

Setting Up for Fine-Tuning

Step 1: Download LLaMA-Factory

To streamline the fine-tuning process, you'll need LLaMA-Factory. This tool is designed for efficient fine-tuning of LLMs and VLMs.

```bash
git clone https://github.com/hiyouga/LLaMA-Factory/ /home/paperspace/LLaMA-Factory/
```

Cloning the Training Repo

Step 2: Create the Dataset

Format your dataset correctly. A good example of dataset formatting comes from ShareGPT, which provides a ready-to-use template. Make sure your data is in a similar format so that it can be processed correctly during training.

ShareGPT's format differs from the Hugging Face format in that all of the ground truth is stored in a single JSON file:

```json
[
  {
    "messages": [
      {
        "content": "<QUESTION>\n<image>",
        "role": "user"
      },
      {
        "content": "<ANSWER>",
        "role": "assistant"
      }
    ],
    "images": [
      "/home/paperspace/Data/cord-images//0.jpeg"
    ]
  },
  ...
  ...
]
```

ShareGPT format

Creating the dataset in this format is just a matter of iterating over the Hugging Face dataset and consolidating all the ground truths into one JSON file, like so:

```python
# https://github.com/NanoNets/hands-on-vision-language-models/blob/main/src/vlm/data/cord.py
import json
from pathlib import Path

from datasets import load_dataset
from typer import Typer

# `load_gt` is a helper defined elsewhere in the repository linked above (not shown here);
# it converts a CORD example's ground truth into the schema the prompt asks for.

cli = Typer()

prompt = """Extract the following data from given image -
For tables I want a json of list of
dictionaries of following keys per dict (one dict per line)
'nm', # name of the item
'price', # total price of all the items combined
'cnt', # quantity of the item
'unitprice' # price of a single item
For sub-total I want a single json of
{'subtotal_price', 'tax_price'}
For total I want a single json of
{'total_price', 'cashprice', 'changeprice'}
the final output should look like and must be JSON parsable
{
    "menu": [
        {"nm": ..., "price": ..., "cnt": ..., "unitprice": ...}
        ...
    ],
    "subtotal": {"subtotal_price": ..., "tax_price": ...},
    "total": {"total_price": ..., "cashprice": ..., "changeprice": ...}
}
If a field is missing,
simply omit the key from the dictionary. Do not infer.
Return only those values that are present in the image.
this applies to high level keys as well, i.e., menu, subtotal and total
"""


def load_cord(split="test"):
    ds = load_dataset("naver-clova-ix/cord-v2", split=split)
    return ds


def make_message(im, item):
    # The assistant turn is the ground truth serialized as a JSON string
    content = json.dumps(load_gt(item).d)
    message = {
        "messages": [
            {"content": f"{prompt}<image>", "role": "user"},
            {"content": content, "role": "assistant"},
        ],
        "images": [im],
    }
    return message


@cli.command()
def save_cord_dataset_in_sharegpt_format(save_to: Path):
    save_to = Path(save_to)
    cord = load_cord(split="train")
    messages = []
    save_to.mkdir(parents=True, exist_ok=True)
    # Images are written to a sibling folder, e.g. /home/paperspace/Data/images/
    image_root = save_to.parent / "images"
    image_root.mkdir(parents=True, exist_ok=True)
    for ix, item in enumerate(cord):
        im = item["image"]
        to = image_root / f"{ix}.jpeg"
        if not to.exists():
            im.save(to)
        message = make_message(str(to), item)
        messages.append(message)
    # All examples go into a single ShareGPT-style JSON file
    with open(save_to / "data.json", "w") as f:
        json.dump(messages, f, indent=2)
```

Relevant Code to Generate Data in ShareGPT format

Once the CLI command is in place, you can create the dataset anywhere on your disk.

```bash
vlm save-cord-dataset-in-sharegpt-format /home/paperspace/Data/cord/
```

Step 3: Register the Dataset

LLaMA-Factory needs to know where the dataset is located on disk, along with the dataset format and the names of the keys in the JSON. For this, we modify the data/dataset_info.json file in the LLaMA-Factory repo, like so:

```python
from torch_snippets import read_json, write_json

dataset_info = '/home/paperspace/LLaMA-Factory/data/dataset_info.json'
js = read_json(dataset_info)

# Register the CORD dataset: where it lives, its format, and which JSON keys to read
js['cord'] = {
    "file_name": "/home/paperspace/Data/cord/data.json",
    "formatting": "sharegpt",
    "columns": {
        "messages": "messages",
        "images": "images"
    },
    "tags": {
        "role_tag": "role",
        "content_tag": "content",
        "user_tag": "user",
        "assistant_tag": "assistant"
    }
}

write_json(js, dataset_info)
```

Add details about the newly created CORD dataset
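Before training, it can save time to sanity-check the generated data.json. The sketch below is a hypothetical helper (not part of LLaMA-Factory) that flags records whose turns do not alternate user/assistant, or whose number of `<image>` placeholders does not match the number of entries in `images`; in our understanding, such mismatches are a common cause of preprocessing errors.

```python
def check_sharegpt_records(records):
    """Return a list of (index, problem) for records that break the expected layout."""
    problems = []
    for i, rec in enumerate(records):
        messages = rec.get("messages", [])
        roles = [m.get("role") for m in messages]
        # Expect alternating user/assistant turns, starting with the user
        expected = ["user", "assistant"] * (len(roles) // 2)
        if not roles or roles != expected:
            problems.append((i, f"unexpected roles {roles}"))
        # Each <image> placeholder should have exactly one matching entry in "images"
        n_tags = sum(m.get("content", "").count("<image>") for m in messages)
        n_images = len(rec.get("images", []))
        if n_tags != n_images:
            problems.append((i, f"{n_tags} <image> tags vs {n_images} images"))
    return problems
```

To use it, load the file with `json.load` and print the returned list; an empty list means every record passed both checks.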

Step 4: Set Hyperparameters

Hyperparameters are the settings that govern how your model learns. Typical hyperparameters include the learning rate, batch size, and number of epochs. These may themselves need tuning as you iterate on training runs.

```yaml
### model
model_name_or_path: Qwen/Qwen2-VL-2B-Instruct

### method
stage: sft
do_train: true
finetuning_type: lora
lora_target: all

### dataset
dataset: cord
template: qwen2_vl
cutoff_len: 1024
max_samples: 1000
overwrite_cache: true
preprocessing_num_workers: 16

### output
output_dir: saves/cord-4/qwen2_vl-2b/lora/sft
logging_steps: 10
save_steps: 500
plot_loss: true
overwrite_output_dir: true

### train
per_device_train_batch_size: 8
gradient_accumulation_steps: 8
learning_rate: 1.0e-4
num_train_epochs: 10.0
lr_scheduler_type: cosine
warmup_ratio: 0.1
bf16: true
ddp_timeout: 180000000

### eval
val_size: 0.1
per_device_eval_batch_size: 1
eval_strategy: steps
eval_steps: 500
```

Add a new file in examples/train_lora/cord.yaml in LLaMA-Factory

We begin by specifying the base model, Qwen/Qwen2-VL-2B-Instruct. As mentioned above, we're using Qwen2 as our starting point, set via the model_name_or_path variable.

Our method is fine-tuning with LoRA (Low-Rank Adaptation), applied to all linear layers of the model (lora_target: all). LoRA is an efficient fine-tuning method that lets us train with fewer resources while maintaining model performance. This approach is particularly useful for our structured data extraction task on the CORD dataset.

The cutoff length limits the sequence length the transformer sees during training. Datasets whose examples have very long questions, answers, or both need a larger cutoff length and, in turn, more GPU VRAM. In the case of CORD, question and answer together do not exceed 1024 tokens, so this value acts as a guard against anomalies that may be present in one or two examples.
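One way to check a cutoff_len choice is to measure the token lengths of your examples. The sketch below takes any token-counting function, so it runs without downloading a model; `whitespace_count` is only a crude stand-in. It also counts text only: the `<image>` placeholder is expanded into visual tokens during training, so treat these numbers as a lower bound.

```python
from typing import Callable, Dict, List


def length_report(records: List[dict], count_tokens: Callable[[str], int],
                  cutoff_len: int = 1024) -> Dict:
    """Count tokens in each record's text turns and flag those longer than cutoff_len."""
    lengths = [count_tokens(" ".join(m["content"] for m in rec["messages"])) for rec in records]
    over = [i for i, n in enumerate(lengths) if n > cutoff_len]
    return {"max_len": max(lengths), "num_over_cutoff": len(over), "over_indices": over}


def whitespace_count(text: str) -> int:
    # Crude stand-in for a real tokenizer
    return len(text.split())


# With the model's real tokenizer (requires `transformers` and a model download):
# from transformers import AutoTokenizer
# tok = AutoTokenizer.from_pretrained("Qwen/Qwen2-VL-2B-Instruct")
# report = length_report(records, lambda t: len(tok(t)["input_ids"]))
```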

We use 16 preprocessing workers to speed up data handling. The cache is overwritten on each run for consistency across runs.

The training settings are also tuned for performance. A batch size of 8 per device, combined with 8 gradient accumulation steps, lets us simulate a larger effective batch size of 64 examples per optimizer step on a single GPU. The learning rate of 1e-4 and the cosine learning rate scheduler with a warmup ratio of 0.1 help the model adjust gradually during training.

10 is a good starting point for the number of epochs since our dataset has only 800 examples. Usually, we aim for anywhere between 10,000 and 100,000 total training samples, depending on the variation in the images. With the above configuration, we're going with 10×800 training samples. If the dataset is too large and we need to train on only a fraction of it, we can either reduce num_train_epochs to a fraction or reduce max_samples to a smaller number.
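The batch and schedule arithmetic can be checked with a few lines of Python. This sketch assumes 800 CORD training examples minus the 10% validation split (720), a single GPU, and ceiling rounding of steps per epoch; your trainer version may count one step per epoch differently. The cosine function implements linear warmup followed by cosine decay to zero.

```python
import math


def schedule_summary(num_train_examples, per_device_batch, grad_accum, num_gpus,
                     epochs, warmup_ratio):
    """Effective batch size, optimizer steps and warmup steps (ceil rounding assumed)."""
    effective_batch = per_device_batch * grad_accum * num_gpus
    steps_per_epoch = math.ceil(num_train_examples / effective_batch)
    total_steps = math.ceil(steps_per_epoch * epochs)
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    samples_seen = num_train_examples * epochs
    return effective_batch, steps_per_epoch, total_steps, warmup_steps, samples_seen


def cosine_lr(step, base_lr, warmup_steps, total_steps):
    """Linear warmup from 0 to base_lr, then cosine decay to 0 at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


# Values from cord.yaml, assuming 720 training examples on one GPU
summary = schedule_summary(720, per_device_batch=8, grad_accum=8, num_gpus=1,
                           epochs=10.0, warmup_ratio=0.1)
print(summary)  # (64, 12, 120, 12, 7200.0)
print([cosine_lr(s, 1e-4, 12, 120) for s in (0, 6, 12, 66, 120)])
```

If these estimates hold, the whole run is only about 120 optimizer steps, so `eval_steps: 500` and `save_steps: 500` may never be reached mid-run; a smaller value would be needed to get intermediate evaluations.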

Evaluation is built into the workflow with a 10% validation split, evaluated every 500 steps to monitor progress. This lets us track performance during training and adjust parameters if necessary.

Step 5: Train the Adapter Model

Once everything is set up, it's time to start training. Since we're fine-tuning with LoRA, we train an adapter: a small set of extra weights that plugs into the existing VLM and updates specific layers without retraining the entire model from scratch.

```bash
llamafactory-cli train examples/train_lora/cord.yaml
```

Training for 10 epochs used roughly 20GB of GPU VRAM and ran for about 30 minutes.
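With `plot_loss: true`, LLaMA-Factory saves loss plots for you. If you want the raw numbers, the Hugging Face Trainer that LLaMA-Factory builds on records them in `log_history` inside a `trainer_state.json` file; the sketch below assumes that file exists in the run's output directory and separates training-loss entries from eval-loss entries.

```python
import json
from pathlib import Path


def read_log_history(output_dir):
    """Load the Trainer's log history (assumes trainer_state.json exists in output_dir)."""
    state = json.loads((Path(output_dir) / "trainer_state.json").read_text())
    return state["log_history"]


def split_losses(log_history):
    """Separate training-loss and eval-loss entries into (step, loss) pairs."""
    train = [(e["step"], e["loss"]) for e in log_history if "loss" in e]
    evals = [(e["step"], e["eval_loss"]) for e in log_history if "eval_loss" in e]
    return train, evals


# Example (run from the LLaMA-Factory directory; path from cord.yaml):
# train, evals = split_losses(read_log_history("saves/cord-4/qwen2_vl-2b/lora/sft"))
```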

Evaluating the Model

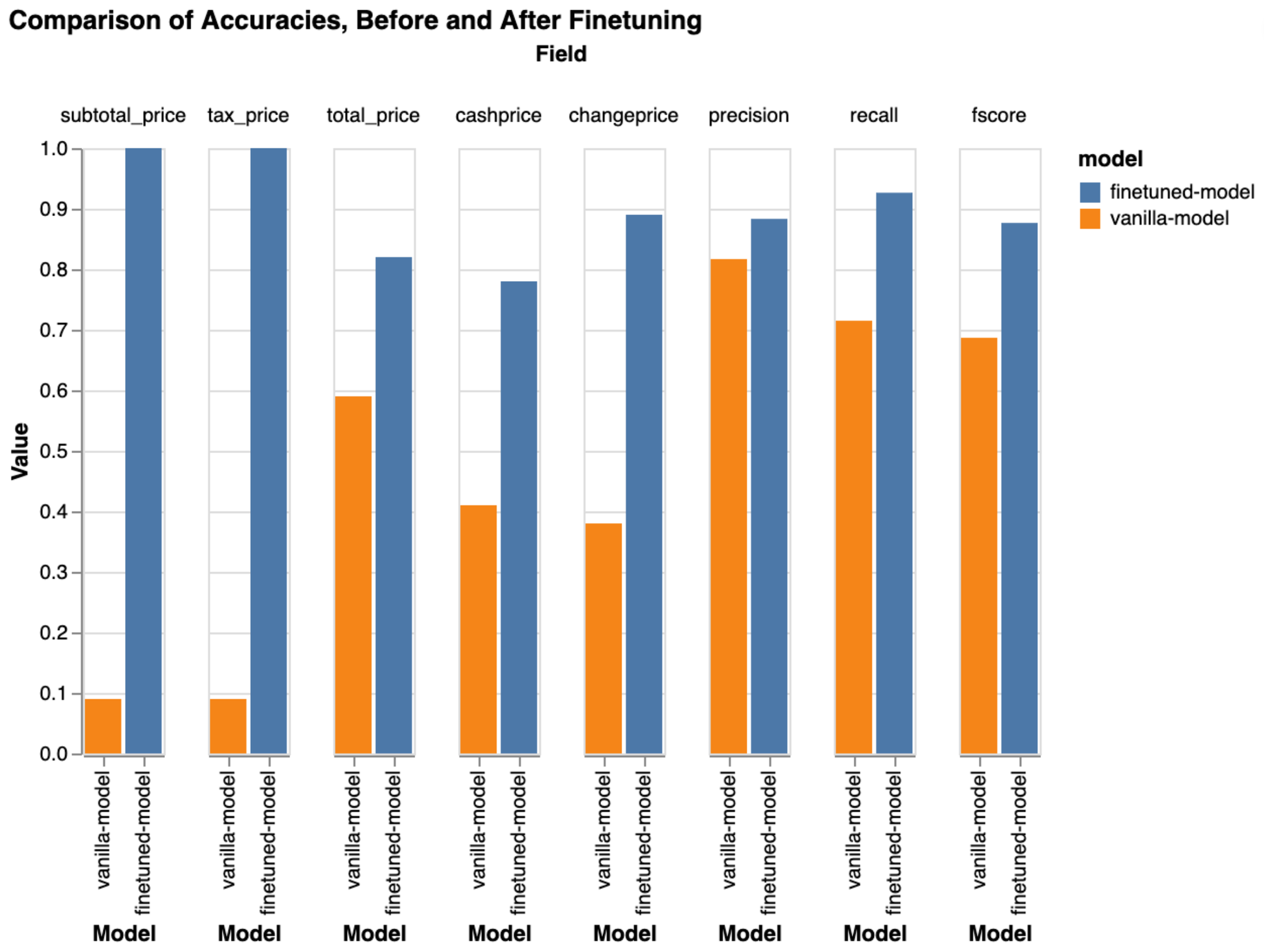

Once the model has been fine-tuned, the next step is to run predictions on your dataset to evaluate how well it has adapted. We compare the previous results under the group vanilla-model and the latest results under the group finetuned-model.

As the comparison shows, there is a considerable improvement in many key metrics, demonstrating that the new adapter has successfully adapted to the dataset.
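The article doesn't spell out how its metrics are computed. As one hypothetical way to produce a vanilla-model vs finetuned-model comparison, the sketch below scores each prediction by the fraction of ground-truth leaf fields (such as `menu.0.price`) it reproduces exactly, with unparsable output scoring zero, and averages over a group.

```python
import json


def flatten(obj, prefix=""):
    """Flatten nested dicts/lists into {"menu.0.price": value, ...}."""
    if isinstance(obj, dict):
        items = obj.items()
    elif isinstance(obj, list):
        items = enumerate(obj)
    else:
        return {prefix: obj}
    out = {}
    for key, value in items:
        out.update(flatten(value, f"{prefix}.{key}" if prefix else str(key)))
    return out


def field_accuracy(prediction: str, ground_truth: dict) -> float:
    """Fraction of ground-truth leaf fields reproduced exactly; unparsable output scores 0."""
    try:
        predicted = flatten(json.loads(prediction))
    except json.JSONDecodeError:
        return 0.0
    expected = flatten(ground_truth)
    if not expected:
        return 1.0
    hits = sum(1 for k, v in expected.items() if k in predicted and predicted[k] == v)
    return hits / len(expected)


def mean_accuracy(predictions, ground_truths):
    """Average field accuracy over one group (e.g. all vanilla-model predictions)."""
    scores = [field_accuracy(p, g) for p, g in zip(predictions, ground_truths)]
    return sum(scores) / len(scores)
```

Computing `mean_accuracy` once for the vanilla model's outputs and once for the fine-tuned model's outputs on the same test images gives a simple side-by-side number.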

Things to Keep in Mind

- Multiple Hyperparameter Sets: Fine-tuning isn't a one-size-fits-all process. You'll likely need to experiment with different hyperparameter configurations to find the optimal setup for your dataset.

- Full Model vs. LoRA Training: If training with an adapter shows no significant improvement, it makes sense to switch to full model training by unfreezing all parameters (in LLaMA-Factory, finetuning_type: full). This provides greater flexibility, increasing the likelihood of learning effectively from the dataset.

- Thorough Testing: Be sure to rigorously test your model at every stage. This includes using validation sets and cross-validation techniques to make sure your model generalizes well to new data.

- Filtering Bad Predictions: The errors your newly fine-tuned model makes can reveal underlying issues, for example noisy labels or under-represented layouts. Use these errors to filter out bad predictions and to refine your data and model further.

- Data/Image Augmentations: Use image augmentations to expand your dataset, enhancing generalization and improving fine-tuning performance.
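Filtering bad predictions can start with a cheap structural check. The sketch below validates a model's output against the schema requested in the CORD prompt earlier in this article (JSON-parsable; only `menu`, `subtotal` and `total` at the top level; only the listed keys inside each). It is a hypothetical helper, and passing it says nothing about whether the values themselves are correct.

```python
import json

ALLOWED_TOP = {"menu", "subtotal", "total"}
ALLOWED_MENU = {"nm", "price", "cnt", "unitprice"}
ALLOWED_SUBTOTAL = {"subtotal_price", "tax_price"}
ALLOWED_TOTAL = {"total_price", "cashprice", "changeprice"}


def check_prediction(text):
    """Return (is_valid, reason) for one model output against the prompt's schema."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return False, "not JSON parsable"
    if not isinstance(data, dict):
        return False, "top level is not an object"
    extra = set(data) - ALLOWED_TOP
    if extra:
        return False, f"unexpected top-level keys: {sorted(extra)}"
    menu = data.get("menu", [])
    if not isinstance(menu, list) or not all(
        isinstance(row, dict) and set(row) <= ALLOWED_MENU for row in menu
    ):
        return False, "malformed menu"
    for key, allowed in (("subtotal", ALLOWED_SUBTOTAL), ("total", ALLOWED_TOTAL)):
        block = data.get(key, {})
        if not isinstance(block, dict) or not set(block) <= allowed:
            return False, f"malformed {key}"
    return True, "ok"
```

Outputs that fail this check can be routed for review or retried, and a cluster of similar failures is a hint about which examples to add or fix before the next fine-tuning round.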

Conclusion

Fine-tuning a VLM can be a powerful way to enhance your model's performance on specific datasets. By carefully selecting your tuning method, setting the right hyperparameters, and thoroughly testing your model, you can significantly improve its accuracy and reliability.