It has been a wildly busy week in AI news thanks to OpenAI, including a controversial blog post from CEO Sam Altman, the wide rollout of Advanced Voice Mode, 5GW data center rumors, major staff shake-ups, and dramatic restructuring plans.

But the rest of the AI world does not march to the same beat, doing its own thing and churning out new AI models and research by the minute. Here is a roundup of some other notable AI news from the past week.

Google Gemini updates

On Tuesday, Google announced updates to its Gemini model lineup, including the release of two new production-ready models that iterate on past releases: Gemini-1.5-Pro-002 and Gemini-1.5-Flash-002. The company reported improvements in overall quality, with notable gains in math, long context handling, and vision tasks. Google claims a 7 percent increase in performance on the MMLU-Pro benchmark and a 20 percent improvement in math-related tasks. But as you know, if you’ve been reading Ars Technica for a while, AI benchmarks typically aren’t as useful as we would like them to be.

Along with model upgrades, Google introduced substantial price reductions for Gemini 1.5 Pro, cutting input token costs by 64 percent and output token costs by 52 percent for prompts under 128,000 tokens. As AI researcher Simon Willison noted on his blog, “For comparison, GPT-4o is currently $5/[million tokens] input and $15/m output and Claude 3.5 Sonnet is $3/m input and $15/m output. Gemini 1.5 Pro was already the cheapest of the frontier models and now it's even cheaper.”
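To make the per-million-token figures concrete, here is a minimal Python sketch of the arithmetic. It uses only the GPT-4o and Claude 3.5 Sonnet prices quoted above. The request size (100,000 input tokens, 1,000 output tokens) and the $10.00 starting price passed to `apply_cut` are hypothetical placeholders chosen for illustration, not Google's actual pricing.

```python
# Prices in US dollars per million tokens, as quoted by Simon Willison.
PRICES_PER_MILLION = {
    "GPT-4o": {"input": 5.00, "output": 15.00},
    "Claude 3.5 Sonnet": {"input": 3.00, "output": 15.00},
}


def request_cost(price, input_tokens, output_tokens):
    """Return the dollar cost of one request at the given per-million prices."""
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


def apply_cut(old_price, percent_cut):
    """Return a price after reducing it by percent_cut percent."""
    return old_price * (1 - percent_cut / 100)


if __name__ == "__main__":
    # Hypothetical request: a long prompt with a short answer.
    for name, price in PRICES_PER_MILLION.items():
        cost = request_cost(price, input_tokens=100_000, output_tokens=1_000)
        print(f"{name}: ${cost:.3f} per request")

    # Hypothetical $10.00 starting price, used only to show how the
    # reported 64 percent (input) and 52 percent (output) cuts scale a price.
    print(f"After a 64 percent cut: ${apply_cut(10.00, 64):.2f}")
    print(f"After a 52 percent cut: ${apply_cut(10.00, 52):.2f}")
```

Because input and output tokens are billed at different rates, the mix of prompt length and response length matters as much as the headline price when comparing models.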

Google also increased rate limits, with Gemini 1.5 Flash now supporting 2,000 requests per minute and Gemini 1.5 Pro handling 1,000 requests per minute. Google reports that the latest models offer twice the output speed and three times lower latency compared to previous versions. These changes may make it easier and more cost-effective for developers to build applications with Gemini than before.

Meta launches Llama 3.2

On Wednesday, Meta announced the release of Llama 3.2, a significant update to its open-weights AI model lineup that we have covered extensively in the past. The new release includes vision-capable large language models (LLMs) in 11B and 90B parameter sizes, as well as lightweight text-only models of 1B and 3B parameters designed for edge and mobile devices. Meta claims the vision models are competitive with leading closed-source models on image recognition and visual understanding tasks, while the smaller models reportedly outperform similar-sized competitors on various text-based tasks.

Willison did some experiments with some of the smaller 3.2 models and reported impressive results for the models’ size. AI researcher Ethan Mollick showed off running Llama 3.2 on his iPhone using an app called PocketPal.

Meta also introduced the first official “Llama Stack” distributions, created to simplify development and deployment across different environments. As with previous releases, Meta is making the models available for free download, with license restrictions. The new models support long context windows of up to 128,000 tokens.

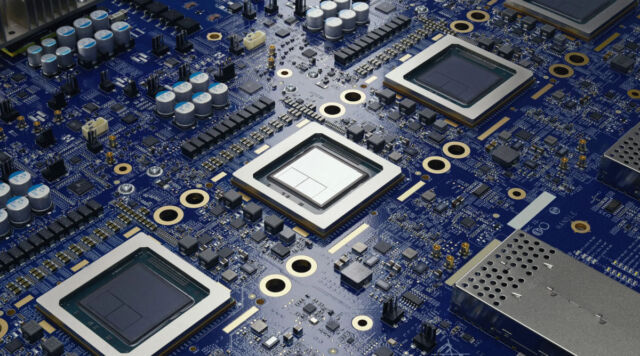

Google’s AlphaChip AI speeds up chip design

On Thursday, Google DeepMind announced what appears to be a significant advancement in AI-driven electronic chip design, AlphaChip. It began as a research project in 2020 and is now a reinforcement learning method for designing chip layouts. Google has reportedly used AlphaChip to create “superhuman chip layouts” in the last three generations of its Tensor Processing Units (TPUs), which are chips similar to GPUs designed to accelerate AI operations. Google claims AlphaChip can generate high-quality chip layouts in hours, compared to weeks or months of human effort. (Reportedly, Nvidia has also been using AI to help design its chips.)

Notably, Google also released a pre-trained checkpoint of AlphaChip on GitHub, sharing the model weights with the public. The company reported that AlphaChip’s impact has already extended beyond Google, with chip design companies like MediaTek adopting and building on the technology for their chips. According to Google, AlphaChip has sparked a new line of research in AI for chip design, potentially optimizing every stage of the chip design cycle from computer architecture to manufacturing.

That wasn’t everything that happened, but those are some major highlights. With the AI industry showing no signs of slowing down at the moment, we’ll see how next week goes.