GPT-4 offered similar capabilities, giving users multiple ways to interact with OpenAI’s AI offerings. But it siloed them in separate models, leading to longer response times and presumably higher computing costs. GPT-4o has now merged those capabilities into a single model, which Murati called an “omnimodel.” That means faster responses and smoother transitions between tasks, she said.

The result, the company’s demonstration suggests, is a conversational assistant much in the vein of Siri or Alexa but capable of fielding much more complex prompts.

“We’re looking at the future of interaction between ourselves and the machines,” Murati said of the demo. “We think that GPT-4o is really shifting that paradigm into the future of collaboration, where this interaction becomes much more natural.”

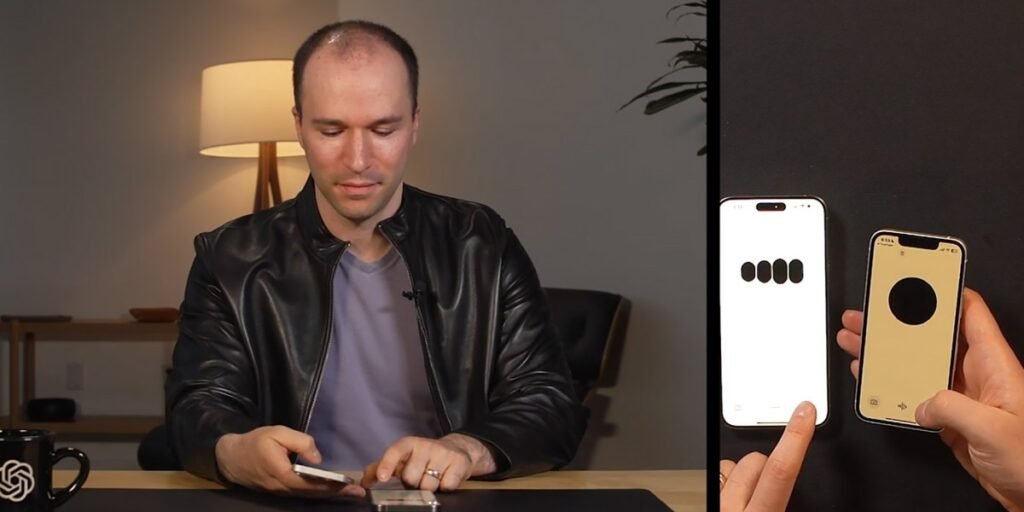

Barret Zoph and Mark Chen, both researchers at OpenAI, walked through a number of applications for the new model. Most impressive was its facility with live conversation. You could interrupt the model during its responses, and it would stop, listen, and adjust course.

OpenAI showed off the ability to change the model’s tone, too. Chen asked the model to read a bedtime story “about robots and love,” quickly jumping in to demand a more dramatic voice. The model got progressively more theatrical until Murati demanded that it pivot quickly to a convincing robot voice (which it excelled at). While there were predictably some short pauses during the conversation as the model reasoned through what to say next, it stood out as a remarkably naturally paced AI conversation.